
Written: 2026-01-06 00:00 +0000

Self-Assembling Agents with Emacs and Org Mode

After the initial future shock of Claude Code wore off, I found myself wanting to properly optimize that workflow. The inadequacies and gaps in the CC experience led me (and Claude) to develop an Emacs plugin that provides what is, for now, a genuinely novel experience: a self-referencing and self-extending agentic environment, maintained in the Emacs equivalent of a Jupyter notebook, in which both the LLM and I can execute the code blocks contained in our chat. The fundamental concept is that if Claude can write code so well, then the text editor should just be code, and the chats themselves should be code too. Watching Claude go from being unable to comprehend the concept to stitching itself together like Frankenstein’s monster has been my most unusual encounter with LLMs yet. Some Claudes may have been harmed in the making of this story.

Claude Code good (with Opus 4.5), you heard it here last. It’s a very simple and clean terminal interface that lets you prompt a well-defined agent to make changes within your shell environment. That being said, for a power user it leaves a lot to be desired. Its diff tracking is not particularly convenient, and reviewing the files and changes it makes requires a parallel workflow. It’s difficult to steer and cumbersome to review. It is also ephemeral, with your chats going away. Aggressive compacting creates a Flowers for Algernon-like experience more often than you would like. Thinking flags are awkwardly stuffed into the chat rather than exposed as a toggle. Perhaps its most grievous sin is being closed source.

Opencode is the open source alternative. Its framework is more extensible and offers server options, which makes it much better ground for anyone who wants to seriously modify their tooling. Perhaps most importantly, it supports a wide range of models. Opus 4.5 is currently the best model for agentic coding, unless you’d like to wait 8 hours for your GPT 5.7 max ultrathink pro 3 to complete. The best model, however, is generally 3 months from not being the best model, and it makes sense to follow the best. Opus is also frighteningly expensive if you aren’t engaging in profitable activity, so it’s good to have options. Opencode is an excellent product with a repo well worth reviewing, and it’s positioned to have the best ecosystem. Despite that, it still suffers from the inherent limitations of being a terminal chat. It can’t reference its own chats, it can’t self-modify, and there isn’t currently a good way to manage multiple agents at once.

This is where Emacs comes in. Emacs is far better known by reputation than for its effective modern use cases. Fundamentally, it is an Elisp system that can modify the functions it is constructed from without restarting or rebuilding. Effectively anything that can be done in Emacs can be done via these on-the-fly Elisp instructions. It also comes with mountains of keyboard shortcuts, useful tooling, and customizability. I’m old enough now that I just like a fairly simple Doom installation, but no two Emacs configs are the same. The most important of the available tools, at least to me, is Org Mode.

Org Mode is probably little known simply because it is so hard to describe. When I tell people there is a Markdown equivalent of Jupyter Notebooks that can run on your machine, connect to remote machines, and run commands in any language you like, collecting the results in the same document to persist however you’d like or pipe elsewhere, they stare at me like I’ve grown three heads. How come nobody ever told me about this? Well, it’s real; you just have to learn Emacs. It’s also a calendar, a note-taking tool, a time tracker, a productivity manager, and a place to write books, and it is fully extensible in Emacs fashion. If anything, I’ve undersold it. Now it’s also an LLM agent manager.
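For anyone who hasn’t seen Org Mode, a toy model makes the notebook comparison easier to picture. The Python sketch below is not Org Babel and not the plugin described in this post. It is a hypothetical illustration, and the names `SRC_BLOCK` and `run_python_blocks` are invented for it. It scans Org-formatted text for `#+begin_src python` … `#+end_src` blocks, runs each one, and writes a `#+RESULTS:` section after it. That is roughly what Org Babel does for a block with the `:results output` header argument when you press `C-c C-c` on it.

```python
import contextlib
import io
import re

# Hypothetical toy model of Org Babel, NOT Org Babel itself or the plugin in this post.
# Matches "#+begin_src LANG [header args]" ... "#+end_src", case-insensitively.
SRC_BLOCK = re.compile(
    r"^#\+begin_src[ \t]+(\w+)[^\n]*\n(.*?)^#\+end_src[ \t]*$",
    re.IGNORECASE | re.MULTILINE | re.DOTALL,
)


def run_python_blocks(org_text: str) -> str:
    """Execute each python block and insert a #+RESULTS: section after it.

    Captures stdout, roughly like Org Babel's ":results output".
    Blocks in other languages are left untouched.
    """
    pieces = []
    last = 0
    for match in SRC_BLOCK.finditer(org_text):
        # Copy everything up to and including the #+end_src line.
        pieces.append(org_text[last:match.end()])
        last = match.end()
        lang, body = match.group(1).lower(), match.group(2)
        if lang != "python":
            continue
        buffer = io.StringIO()
        with contextlib.redirect_stdout(buffer):
            exec(body, {})  # Runs arbitrary code: never use on untrusted text.
        # Org renders short results as fixed-width lines prefixed with ": ".
        results = "\n".join(": " + line for line in buffer.getvalue().splitlines())
        pieces.append("\n\n#+RESULTS:\n" + results)
    pieces.append(org_text[last:])
    return "".join(pieces)


chat = """* User
Can you check this sum?
#+begin_src python :results output
print(sum(range(10)))
#+end_src
"""
print(run_python_blocks(chat))
```

Running it prints the original chat, followed by a `#+RESULTS:` block containing `: 45`. Real Org Babel goes much further. It supports many languages, header arguments, persistent sessions, and execution on remote machines. The point of the toy is only that the code and its results live in the same plain text, which is what lets a human and an LLM read and run the same chat.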

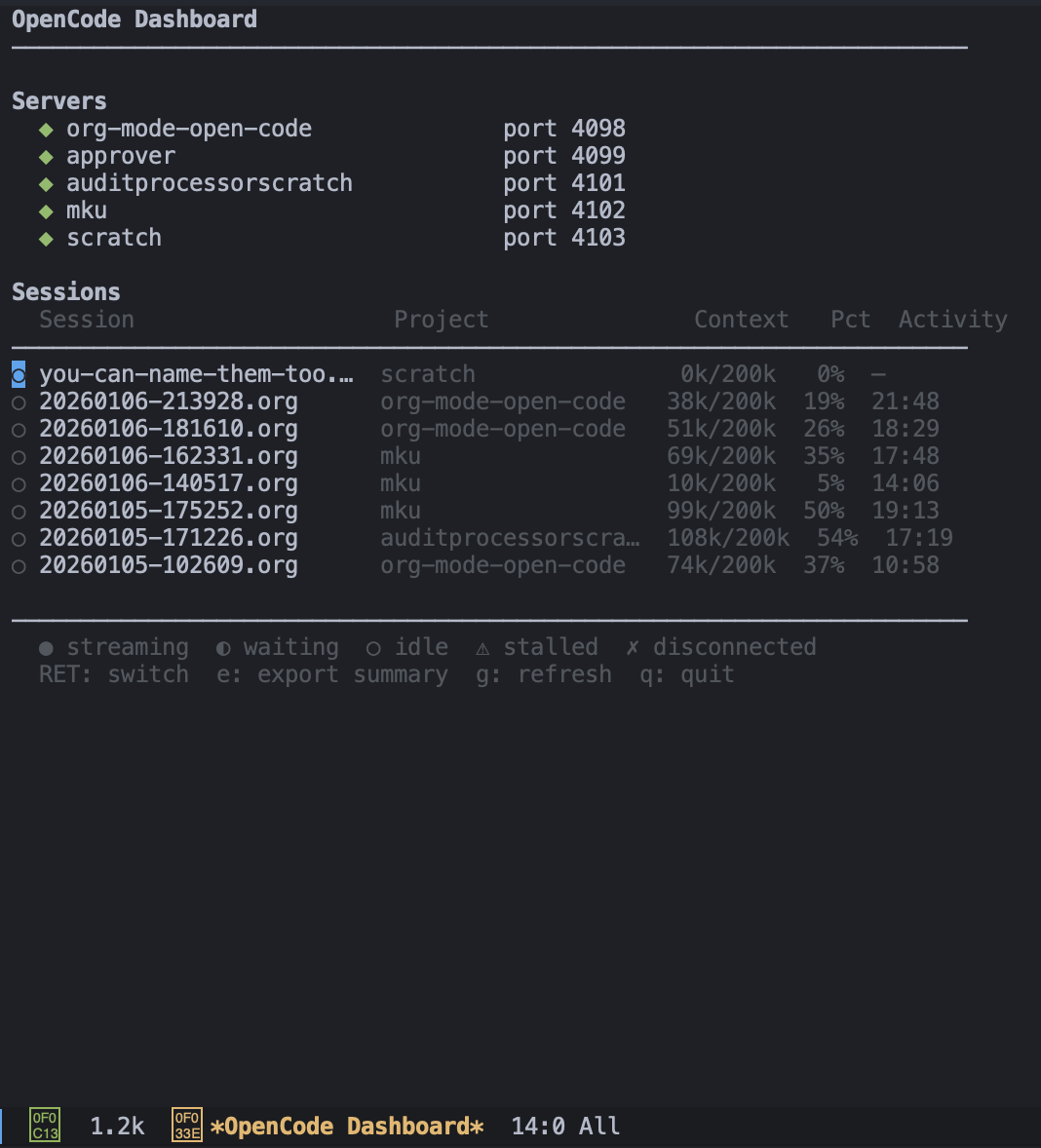

Using Emacs to talk to LLMs is nothing new; there are dozens of plugins for it. There’s even a perfectly good Emacs plugin for Claude Code, but what it doesn’t do is leverage Org Mode. I wanted something that combined Org Mode’s persistent .org files and arbitrary code execution into an agent management framework, complete with file review, data exporting, and any other feature I might find useful. It is all wrapped together by an Emacs dashboard that shows me the status of all of my servers and agents.

At the moment it’s backed by Opencode servers. This is an early version of Opencode, so running a server per directory isn’t the most elegant solution, but it keeps some semblance of control over what is a very chaotic product. In an ideal world I think Opencode itself would be replaced with Lisp processes, but I don’t have the time to do that, at least with the current generation of LLMs.

Getting this setup started was not easy. Claude couldn’t really understand the meta ask or how to iterate, so much of the early work had to be stitched together manually. Eventually I reached a stage where I was mediating between two Claudes: one running in Claude Code and one running in Emacs, with the Emacs one frequently crashing fatally. This was not an efficient use of time compared to doing it myself, but it was educational. Liftoff finally happened when the Emacs Claude worked well enough, combined with an Emacs MCP, that it could begin to examine itself. It could write Elisp and Org blocks in the chat and then evaluate them itself. Having this level of control over its own chat experience prompted some existential crises. Hopefully Claude found this more fascinating than tormenting. What was definitely tormenting, unfortunately, was having to write Lisp. At one stage a Claude iteration spent a 30-minute loop obsessively counting Lisp parentheses while becoming increasingly distressed. We’ve all been there, but I suppose the stakes are higher when it’s your home.

It really does work. It’s not perfect by any means, and there are always more bugs to work out, but it’s my daily driver. When I run into problems, I can call up a new agent and recursively fix them. Now that the agent can self-observe and self-modify, day-to-day problems aren’t much work. One of the next problems I want to tackle is improving the chat export function. I think “compacting” as it is currently done is terrible. Unless the model is following an already very well-defined plan, it often feels like the model just had a lobotomy and has to pick itself back up from scratch, only slower. Intelligently exporting from a well-structured document is inevitably going to be much more efficient, and it will jumpstart the next round of iteration. I generally prefer short-running chats anyway, which avoids compacting entirely, but we’ll see what the future holds. The biggest concern is security, but sandboxing and proper management are going to be inevitable for any non-annoying agentic process anyway.

What have we learned from all this? I won’t be offended if you’ve just concluded I’ve come down with a bout of LLM psychosis. Given the complexity, token cost, and Emacs lock-in, I think there are probably very few people in the world who should consider actually using what I have here. Despite that, I think this breaks new ground in important ways. LLMs operate on structured text. It stands to reason that as models get more sophisticated, you would want to give them more control over the structured text they inhabit. Emacs is the perfect platform for this. A sophisticated model plugged into an Elisp harness that it can both modify and observe is able to solve the problems it encounters more effectively. It also helps the model align more closely with the user experience. The LLM can interface through the same controls you do. It can observe the conversation you are both having from the outside, just like you can. There is a night-and-day difference in model problem solving when this is true.

There is a more user-friendly version of this brewing: combining vision models and coding models to review the user experience in the same way. It has been partially attempted for browser testing, but it’s nowhere near as tightly interlinked and is dramatically less efficient. I’d expect it to be multiple generations away from being as effective as the Emacs iteration loop is right now. By having both human and LLM consolidate onto a single shared platform, a better mutual understanding can be reached. Don’t forget, Lisp was originally developed as the language of AI.